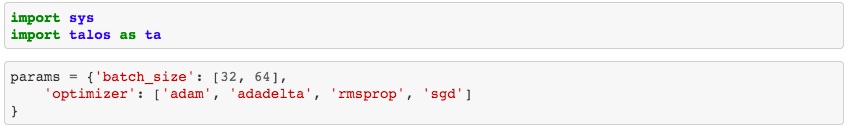

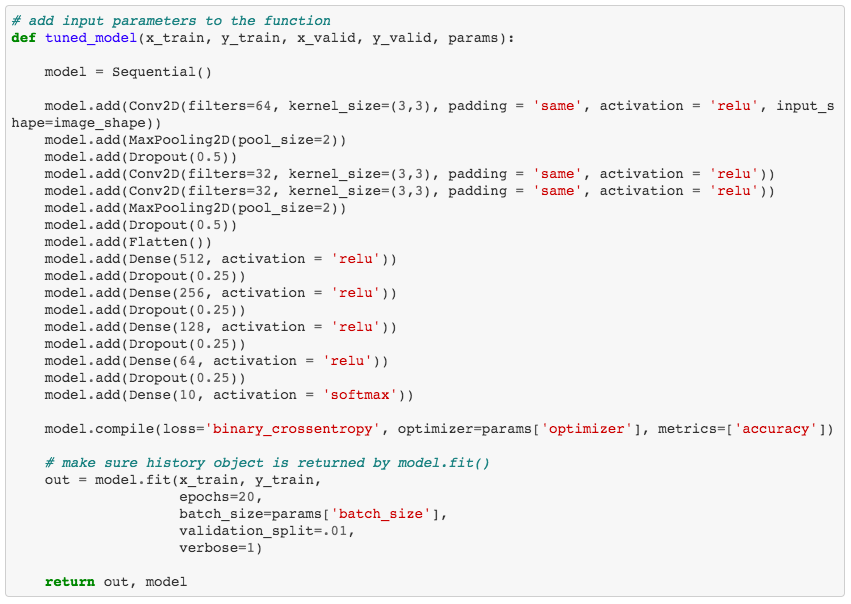

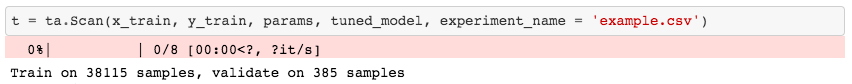

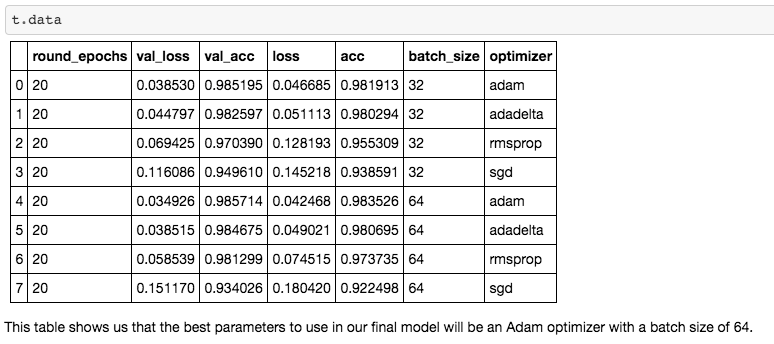

Now that you have gone through all the work of obtaining, scrubbing, exploring, and modeling your data with a neural network, how do you know it is tuned to produce the best results? Hyperparameter tuning is a necessary step in the iterative process of building a model. Because training a neural network can be computationally expensive and time-consuming, manually trying different hyperparameters, or rerunning the model with different optimizers, gets tedious fast. With Talos, a few lines of code automate the tuning while you go about doing other things.

Grid Search

Grid search is a technique that looks for the best hyperparameters of a model by training it on every unique combination of the values you specify, then keeping the combination that makes the most accurate predictions. (For more on grid search, see the link in the Further Reading section below.)

Talos

According to its GitHub page, "Talos radically changes the ordinary Keras workflow by fully automating hyperparameter tuning and model evaluation. Talos exposes Keras functionality entirely and there is no new syntax or templates to learn." Essentially, Talos gives you a ready-made workflow for your hyperparameter tuning needs. A long list of key features can be found on the Talos README page, but here we will be working with grid search.

In this case, I keep it simple and optimize only 2 parameters:

- batch_size: the number of samples propagated through the network before each weight update.
- optimizer: the algorithm that updates the network's weights to minimize loss during training. Each optimizer has its own internal parameters. (For more on optimization algorithms, see the link in the Further Reading section below.)

Step 1. Import Talos and set up your parameters for the grid search.

Here you can see that I've chosen to test only 4 different optimizers along with 2 different batch sizes, which gives 8 combinations in total. So really, all I'm trying to figure out is which combination of batch size and optimizer works best.

Step 2. Create a function that will place your grid search parameters into the model.

Step 3. Use the function to run the model with the grid search.

Warning: this will take a while. Seriously, like, a while. It is best to run this overnight or spread it across multiple systems. If you are running it on a personal computer, be aware that your computer will be out of commission for some time.

Step 4. Interpret your results.

This is a nice aspect of Talos. After running your model, which took forever (I tried to warn you!), you can quickly access all of your results. What you see here are the performance metrics for each combination of hyperparameters. For this model, a batch size of 64 with the Adam optimization algorithm works best.

Hopefully, you will be able to use the Talos optimization platform with Keras to better tune your neural networks. For background on grid search, hyperparameter tuning, and optimization algorithms, I suggest the excellent Towards Data Science articles listed below.

Further Reading

- "Grid Search for model tuning" by Rohan Joseph, Towards Data Science.
- "Simple Guide to Hyperparameter Tuning in Neural Networks" by Matthew Stewart, Towards Data Science.
- "Types of Optimization Algorithms used in Neural Networks and Ways to Optimize Gradient Descent" by Anish Singh Walia, Towards Data Science.
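
If you want to see what a grid search over batch size and optimizer boils down to, here is a minimal sketch in plain Python. It is not Talos code and not the code from this post: the names `param_grid`, `expand_grid`, and `grid_search` are made up for illustration, and the `evaluate` callback is a placeholder for "train the Keras model with these settings and return a validation score". The post only names a batch size of 64 and the Adam optimizer, so the other values in the grid are assumptions.

```python
from itertools import product

# Illustrative search space: 2 batch sizes and 4 optimizers, as in the post.
# Only batch size 64 and Adam are named there; the other values are assumed.
param_grid = {
    "batch_size": [32, 64],
    "optimizer": ["adam", "sgd", "rmsprop", "adagrad"],
}


def expand_grid(grid):
    """Return one dict per unique combination of hyperparameter values."""
    names = list(grid)
    value_lists = [grid[name] for name in names]
    return [dict(zip(names, values)) for values in product(*value_lists)]


def grid_search(grid, evaluate):
    """Score every combination and return (best, all_results).

    `evaluate` is a hypothetical callback that takes one parameter dict and
    returns a score where higher is better, e.g. validation accuracy.
    """
    results = [(params, evaluate(params)) for params in expand_grid(grid)]
    best = max(results, key=lambda item: item[1])
    return best, results


# 2 batch sizes x 4 optimizers = 8 models to train.
print(len(expand_grid(param_grid)))
```

Every extra value you add multiplies the number of training runs, which is why the full search takes so long and why a tool that automates the bookkeeping is handy.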
